Identify top-tier AI vision models for free with the data-driven Open VLM Leaderboard. Hosted by OpenCompass on Hugging Face, this utility aggregates benchmarks like OCRBench to verify technical accuracy. Filter by niche skills to locate open-source alternatives that outperform $20/month subscriptions on tasks like document analysis.

Generate unlimited UI code and visuals for free by voting on top models in DesignArena. This visual benchmarking tool grants unrestricted access to premium engines like Claude 3.7 and GPT-5 Preview without a subscription. Built for developers who need copy-paste-ready code, it serves as a zero-cost alternative to v0.dev for rapid prototyping.

I Love Free - The best free AI tools directory

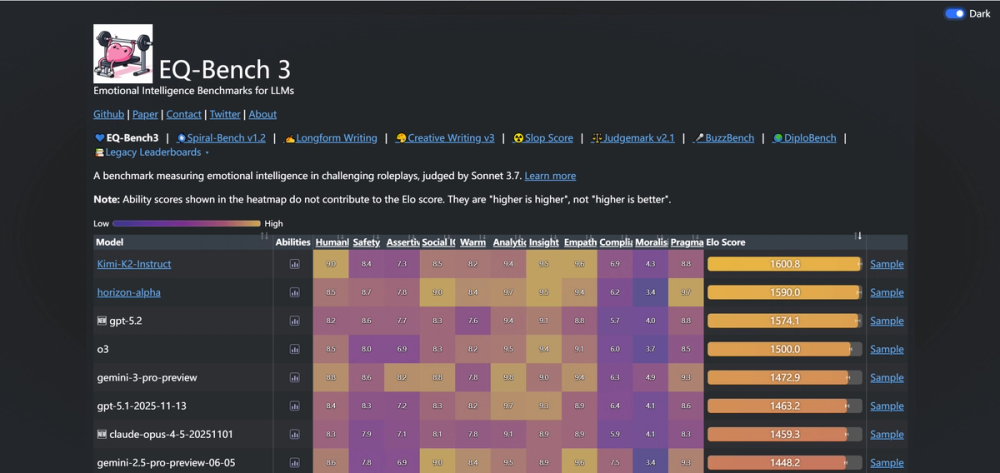

Access definitive AI emotional intelligence rankings for free with EQ-Bench. This open-source standard provides unlimited leaderboard access to compare model empathy and insight without hidden subscriptions. Select the optimal AI for creative writing or therapy scenarios by analyzing performance in rigorous conflict simulations.

Work through complex algebra and calculus proofs for free with MathArena's multi-model consensus engine. It cross-references top-tier models like OpenAI o1 and Gemini to reduce the chance of hallucinated answers. The platform targets students and engineers with 10 deep reasoning queries per day at $0, offering a cross-checked alternative to the $20/month ChatGPT subscription.
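To make the idea of multi-model consensus concrete, here is a minimal sketch of majority voting over answers from several models. It is an illustration only: how MathArena actually combines model outputs is not described here, and the function name `consensus_answer` and the `min_agreement` threshold are assumptions made up for this example.

```python
from collections import Counter


def consensus_answer(answers, min_agreement=0.5):
    """Return the answer shared by a strict majority of models, or None.

    `answers` is a list of final answers (strings), one per model.
    Hypothetical helper: the threshold and normalization are assumptions.
    """
    # Normalize whitespace and case so "42" and " 42 " count as the same answer.
    normalized = [a.strip().lower() for a in answers if a and a.strip()]
    if not normalized:
        return None

    # Pick the most frequent answer and count how many models gave it.
    answer, votes = Counter(normalized).most_common(1)[0]

    # Accept it only if more than `min_agreement` of the models agree.
    if votes / len(normalized) > min_agreement:
        return answer
    return None
```

When the models disagree too much, the function returns `None` rather than guessing, which is the behavior you would want before trusting a result.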

Benchmark top AI models against trick questions for free with Simple Bench to expose reasoning failures. Use the free "Council" app to filter out individual model errors and get consensus answers, which is useful for developers weighing whether a Pro plan is worth the cost. Unlike subjective leaderboards, this utility provides falsifiable data for checking LLM reasoning before you subscribe.

Assess model reasoning through live game replays and dynamic Elo ratings for free with AI Elo. Browse unlimited match histories and objective rankings at no cost, avoiding the subjectivity of vote-based leaderboards such as LMSYS. Built for validating agentic behavior, this tool delivers real-time performance metrics based on verifiable wins rather than static memorization.
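For readers unfamiliar with Elo ratings, the standard update rule can be sketched in a few lines. This is the textbook Elo formula, not AI Elo's own implementation; the K-factor of 32 and the function names are assumptions chosen for illustration.

```python
def expected_score(rating_a, rating_b):
    """Probability-like expected score of player A against player B."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a, rating_b, score_a, k=32.0):
    """Return updated (rating_a, rating_b) after one game.

    `score_a` is 1.0 if A wins, 0.5 for a draw, 0.0 if A loses.
    `k` (assumed to be 32 here) controls how fast ratings move.
    """
    exp_a = expected_score(rating_a, rating_b)
    exp_b = 1.0 - exp_a
    score_b = 1.0 - score_a
    # Each rating moves in proportion to how surprising the result was.
    new_a = rating_a + k * (score_a - exp_a)
    new_b = rating_b + k * (score_b - exp_b)
    return new_a, new_b
```

Two equally rated models at 1500 each have an expected score of 0.5, so a win moves the winner up by 16 points and the loser down by 16. An upset against a higher-rated model moves the ratings further.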